Internal Design of Convert CSC CSR¶

Overview¶

ConvertCSCCSR is an algorithm used to transform CSC format input to CSR format input or CSR format input to CSC format input. The algorithm is quite easy, but due to DDR limits, we cannot get one data per clock, so in our design, we use several caches with depth ajustable to get much better performance.

Algorithm¶

ConvertCSCCSR algorithm implementation:

for each edge (u, v) in graph // calculate du degree

degree(v) += 1

offset2(v) += 1

begin = 0

for node u in graph

end = offset1(u)

for i in (begin, end)

index = indice1(i)

index2 = offset2[index]

offset2[index] += 1

indice2[index2] = u

begin = end

Implemention¶

The input matrix should ensure that the following conditions hold:

- No duplicate edges

- compressed sparse column/row (CSC/CSR) format

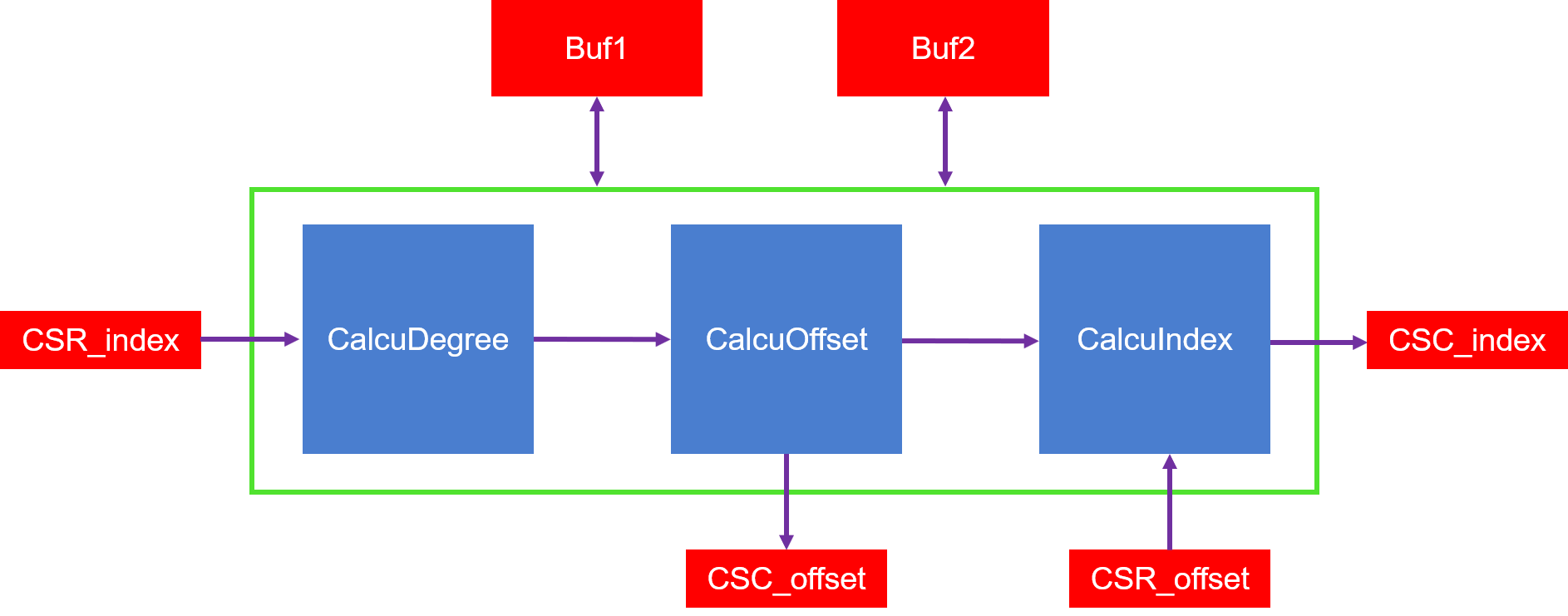

The algorithm implemention is shown as the figure below:

Figure 1 : convert CSC CSR architecture on FPGA

As we can see from the figure:

- firstly call the Module calculate degree to generate the transfer offset array.

- by using the input offset and indice arrays and also the calculated new offset array, generate the new indice array

Profiling¶

The hardware resource utilizations are listed in the following table.

Table 1 : Hardware resources for ConvertCSCCSR with cache

| Kernel | BRAM | URAM | DSP | FF | LUT | Frequency(MHz) |

| kernel_pagerank_0 | 413 | 0 | 7 | 295330 | 207754 | 300 |

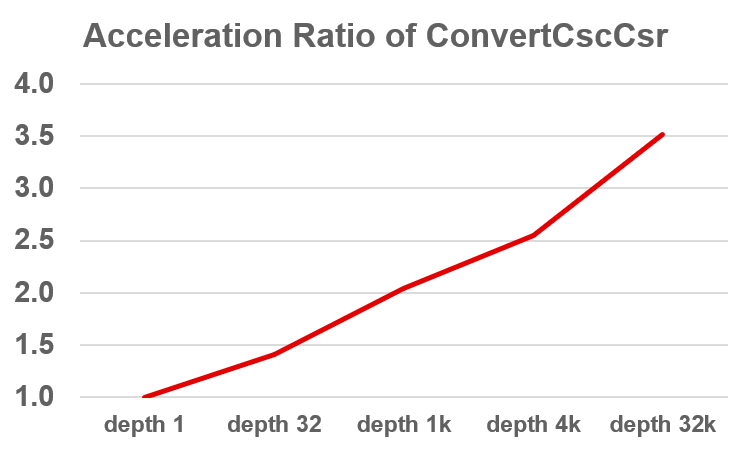

Figure 2 : Cache depth’s influence to ConvertCSCCSR acceleration

Note

1. depth 1, depth 32, depth 1k, they use LUTRAM only, in compare with resources of depth 1, only LUT and FF changes.

2. depth 4k, depth 32k, they use URAM, in compare with resources of depth 1, the URAM utilization will be the major difference.

3. HW Frequency: depth 1 (300MHz), depth 32 (300MHz), depth 1k (275.6MHz), depth 4k (300MHz), depth 32k (275.7MHz)