Kria™ KR260 Robotics Starter Kit

Machine Vision Camera Tutorial

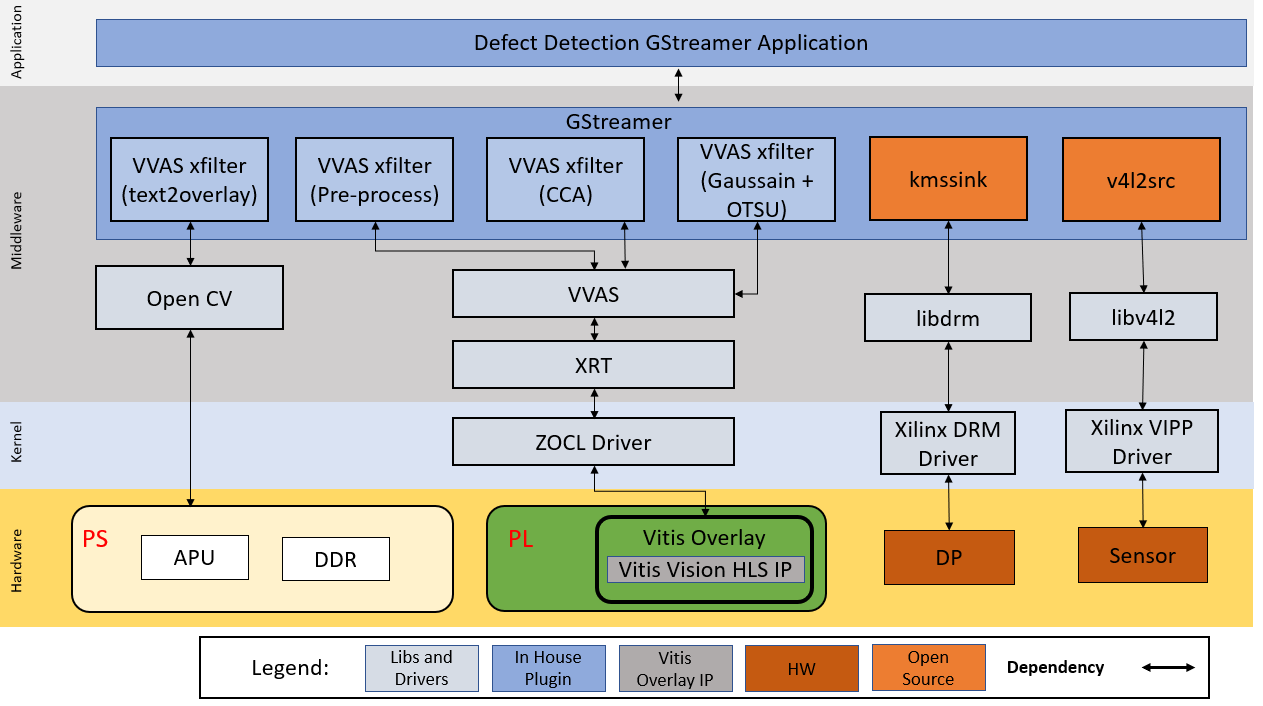

Software Architecture of the Platform¶

Architecture Diagram¶

The following figure illustrates the system architecture of the Kria KR260 MV-Defect-Detect application.

Deployment¶

This reference design is provided as a prebuilt Docker image for a user-friendly out-of-the-box experience. In addition to the Docker image, the software application source files are provided to allow customization.

The end application leverages custom GStreamer plugins for the Vitis Vision libraries, built using the VVAS framework. These plugins are mapped to the following libraries:

| Binary Artifact | Type | Description |
|---|---|---|
| libvvas_otsu.so | Kernel Library | Vitis Vision library for the Gaussian + OTSU detector. Preserves edges while smoothing and calculates the optimum threshold between foreground and background pixels. |
| libvvas_preprocess.so | Kernel Library | Vitis Vision library to filter and remove the salt-and-pepper noise for defect detection. |
| libvvas_cca.so | Kernel Library | Vitis Vision library to determine the defective pixels in the image. |
| libvvas_text2overlay.so | Kernel Library | OpenCV software library to calculate the defect density, determine the quality of the mango, and embed the result as text into the output images. |
| mv-defect-detect | Application Executable | Executable to invoke the whole application with options to choose a source, width, height, framerate, configuration file path, and other parameters. |
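To make the "optimum threshold between foreground and background pixels" concrete, the following is a minimal pure-Python sketch of the textbook Otsu method on 8-bit pixel values. It is an illustration only and not the Vitis Vision implementation inside libvvas_otsu.so; the function name `otsu_threshold` and the sample data are assumptions made for this example.

```python
def otsu_threshold(pixels):
    """Return the 8-bit threshold t that maximizes the between-class variance.

    Pixels with value <= t form one class and pixels with value > t the other.
    Illustrative only: this is the textbook method, not the accelerated kernel.
    """
    hist = [0] * 256
    for p in pixels:
        hist[p] += 1

    total = len(pixels)
    sum_all = sum(value * count for value, count in enumerate(hist))

    best_t, best_var = 0, -1.0
    w0 = 0      # number of pixels with value <= t
    sum0 = 0    # sum of pixel values <= t
    for t in range(256):
        w0 += hist[t]
        sum0 += t * hist[t]
        w1 = total - w0
        if w0 == 0:
            continue        # no pixels in the lower class yet
        if w1 == 0:
            break           # every pixel is already in the lower class
        mu0 = sum0 / w0
        mu1 = (sum_all - sum0) / w1
        between_var = w0 * w1 * (mu0 - mu1) ** 2
        if between_var > best_var:
            best_t, best_var = t, between_var
    return best_t


# Hypothetical bimodal data: dark background (10) and bright foreground (200).
sample = [10] * 50 + [200] * 50
t = otsu_threshold(sample)
binary = [1 if p > t else 0 for p in sample]
print(t, sum(binary))
```

Any threshold between the two pixel populations separates them equally well here; the sketch returns the lowest such value.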

Application Overview¶

The 1080p Monitor displays one of the following outputs:

Input Image (from a file or a live source, in grayscale or RGB format)

Binary Image (output of the pre-process kernel, in which the Vitis Vision library threshold function thresholds the input image and the median blur filter, a non-linear digital filter, reduces noise)

text2overlay Image (output of the cca kernel with a text overlay showing the defective portions along with the defect results)
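The pre-process step described above (thresholding plus a median blur that removes salt-and-pepper noise) can be sketched in plain Python as follows. This is a minimal illustration under stated assumptions: the 3x3 window size, the border handling, the threshold value, and the order of the two operations are choices made for the example and are not taken from the accelerated kernel.

```python
def median_filter_3x3(img):
    """Apply a 3x3 median filter to a 2D list; border pixels are copied unchanged."""
    height, width = len(img), len(img[0])
    out = [row[:] for row in img]
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            window = sorted(
                img[y + dy][x + dx] for dy in (-1, 0, 1) for dx in (-1, 0, 1)
            )
            out[y][x] = window[4]   # middle of the 9 sorted neighbours
    return out


def threshold(img, t):
    """Binarize: pixels above t become 255, all others become 0."""
    return [[255 if p > t else 0 for p in row] for row in img]


# Hypothetical 5x5 grayscale patch with a single "salt" pixel in the centre.
patch = [[50] * 5 for _ in range(5)]
patch[2][2] = 255

filtered = median_filter_3x3(patch)
binary = threshold(filtered, 128)
print(filtered[2][2], binary[2][2])
```

Because the median ignores isolated outliers, the single bright pixel is replaced by the value of its neighbours and does not survive thresholding.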

The text2overlay image, along with its embedded text, contains:

Defect Density (amount of defective portion)

Defect Decision (Is Defected: Yes/No)

Accumulated Defects: disabled by default; to enable them, set is_acc_result to 1 in the text2overlay.json file. For details, see Software Accelerator.
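As a rough sketch of how the is_acc_result setting could be changed programmatically, the helper below sets an existing key wherever it appears in a parsed JSON document. The layout of text2overlay.json is not shown in this document, so the nested `kernels`/`config` structure in the sample is purely hypothetical, and the helper names are assumptions for this example. Editing the file by hand works equally well.

```python
import json


def set_key_everywhere(node, key, value):
    """Recursively set `key` to `value` wherever it already exists; return hit count."""
    hits = 0
    if isinstance(node, dict):
        for k in node:
            if k == key:
                node[k] = value
                hits += 1
            else:
                hits += set_key_everywhere(node[k], key, value)
    elif isinstance(node, list):
        for item in node:
            hits += set_key_everywhere(item, key, value)
    return hits


def enable_accumulated_results(path):
    """Set is_acc_result to 1 in the JSON file at `path` (path supplied by the caller)."""
    with open(path) as f:
        config = json.load(f)
    if set_key_everywhere(config, "is_acc_result", 1) == 0:
        raise KeyError("is_acc_result not found in " + path)
    with open(path, "w") as f:
        json.dump(config, f, indent=2)


# Hypothetical layout, for demonstration only.
sample = json.loads('{"kernels": [{"config": {"is_acc_result": 0}}]}')
hits = set_key_everywhere(sample, "is_acc_result", 1)
print(hits, sample)
```

The helper only updates keys that already exist, so it cannot silently add the setting in the wrong place.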

For a given set of test images, calculate the accuracy manually as follows:

Each image in the test set is prelabeled by a human. The label indicates whether the mango in the image is defective.

The human's prelabeled result is compared with the result given by the MV-Defect-Detect system, and the images that are detected correctly and incorrectly are counted.

The accuracy of the MV-Defect-Detect system is the ratio of the number of correctly detected images to the total number of images in the test set.
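The accuracy calculation described above amounts to the following small sketch. The function name and the sample labels are made up for illustration; `True` stands for "defective".

```python
def accuracy(human_labels, system_decisions):
    """Fraction of images where the system decision matches the human label."""
    if len(human_labels) != len(system_decisions):
        raise ValueError("both lists must cover the same images")
    if not human_labels:
        raise ValueError("the test set is empty")
    correct = sum(1 for h, s in zip(human_labels, system_decisions) if h == s)
    return correct / len(human_labels)


# Hypothetical test set of four images; the system gets the third one wrong.
human = [True, False, True, True]
system = [True, False, False, True]
result = accuracy(human, system)
print(result)  # 0.75
```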

Accumulated defects are the number of defects detected over a given period of time.

The raw input image of the mango is shown in the following figure.

The pre-processed image of the mango is shown in the following figure.

The final output image of the mango is shown in the following figure.

Note: The test mango image is taken from the Cofilab site.

The output image to be displayed on the monitor is selected via a command-line switch when starting the application.

Video Capture Pipeline¶

Media Source Bin GStreamer Plugin¶

The mediasrcbin plugin is designed to simplify the usage of live video capture devices in this design. Without it, the initialization and configuration of these devices must be handled carefully by hand. The plugin is a bin element that includes the standard v4l2src GStreamer element. It configures the media pipelines of the supported video sources in this design. The v4l2src element inside the mediasrcbin element interfaces with the V4L2 Linux framework and the AMD VIPP driver through the video device node. The mediasrcbin element interfaces with the Media Controller Linux framework through the v4l-subdev and media device nodes, which allow it to configure the media pipeline and its sub-devices. It uses the libmediactl and libv4l2subdev libraries, which provide the following functionality:

Enumerate entities, pads and links

Configure sub-devices

Set media bus format

Set dimensions (width/height)

Set frame rate

Export sub-device controls

The mediasrcbin plugin sets the media bus format and resolution on each sub-device source and sink pad for the entire media pipeline. The formats between pads that are connected through links need to match. Refer to the Media Framework section for more information on entities, pads, and links.
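For orientation, the per-pad configuration that mediasrcbin performs through libmediactl corresponds roughly to what the media-ctl command-line utility does with its `-V` option. The sketch below only builds such command lines and does not run them. The entity names, pad numbers, media device path, and media bus code are hypothetical placeholders; the real values come from the topology printed by `media-ctl -p` on the target.

```python
import shlex


def media_ctl_set_format(media_dev, entity, pad, mbus_code, width, height):
    """Build a media-ctl argument list that sets the format on one pad."""
    spec = f'"{entity}":{pad} [fmt:{mbus_code}/{width}x{height}]'
    return ["media-ctl", "-d", media_dev, "-V", spec]


# Hypothetical pipeline: sensor source pad 0 -> ISP sink pad 0 -> ISP source pad 1.
pads = [("sensor-entity", 0), ("isp-entity", 0), ("isp-entity", 1)]

commands = [
    media_ctl_set_format("/dev/media0", entity, pad, "SRGGB10_1X10", 1920, 1080)
    for entity, pad in pads
]

for cmd in commands:
    print(shlex.join(cmd))
```

Applying the same format to both ends of every link is what keeps connected pads consistent, which is the matching requirement stated above.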

Kernel Subsystems¶

To model and control video capture pipelines such as the ones used in this application on Linux systems, multiple kernel frameworks and APIs are required to work in concert. For simplicity, this document refers to the overall solution as Video4Linux (V4L2), although that framework provides only part of the required functionality. The individual components are discussed in the following sections.

Driver Architecture¶

The Video Capture Software Stack figure in the Capture section shows how the generic V4L2 driver model of a video pipeline is mapped to the single-sensor capture pipelines. The video pipeline driver loads the necessary sub-device drivers and registers the device nodes it needs, based on the video pipeline configuration specified in the device tree. The framework exposes the following device node types to user space to control certain aspects of the pipeline:

Media device node: /dev/media*

Video device node: /dev/video*

V4L2 sub-device node: /dev/v4l-subdev*
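A quick way to see which of these device nodes exist on a running system is to glob for the three patterns. This is a minimal sketch; the function and dictionary names are chosen for this example, and on a machine without a capture pipeline all three lists are simply empty.

```python
import glob

# Device node patterns listed above, keyed by node type.
NODE_PATTERNS = {
    "media": "/dev/media*",
    "video": "/dev/video*",
    "subdev": "/dev/v4l-subdev*",
}


def list_v4l2_nodes(patterns=NODE_PATTERNS):
    """Return a dict mapping node type to the sorted list of matching device nodes."""
    return {kind: sorted(glob.glob(pattern)) for kind, pattern in patterns.items()}


nodes = list_v4l2_nodes()
for kind, paths in nodes.items():
    print(kind, paths)
```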

Media Framework¶

The main goal of the media framework is to discover the device topology of a video pipeline and to configure it at runtime. To achieve this, the pipelines are modeled as an oriented graph of building blocks called entities connected through pads. An entity is a basic media hardware building block. It can correspond to a large variety of blocks such as physical hardware devices (for example, image sensors), logical hardware devices (for example, soft IP cores inside the PL), DMA channels, or physical connectors. Physical or logical devices are modeled as sub-device nodes and DMA channels as video nodes. A pad is a connection endpoint through which an entity can interact with other entities. Data produced by an entity flows from the entity’s output to one or more entity inputs. A link is a point-to-point oriented connection between two pads, either on the same entity or on different entities. Data flows from a source pad to a sink pad. The framework creates a media device node that allows a user-space application to configure the video pipeline and its sub-devices through the libmediactl and libv4l2subdev libraries. The media controller API provides the following functionality:

Enumerate entities, pads and links

Configure pads

Set media bus format

Set dimensions (width/height)

Configure links

Enable/disable

Validate formats
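The entity, pad, and link model, together with the format validation step, can be illustrated with a small data-structure sketch. This is a conceptual model only: the class names, the entity names, and the formats are hypothetical, and the real validation is performed by the kernel drivers rather than in user space.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PadFormat:
    mbus_code: str
    width: int
    height: int


@dataclass
class Pad:
    entity: str
    index: int
    is_source: bool
    fmt: PadFormat


@dataclass
class Link:
    source: Pad
    sink: Pad
    enabled: bool = True


def find_format_mismatches(links):
    """Return a description for every enabled link that is invalid."""
    problems = []
    for link in links:
        if not link.enabled:
            continue    # disabled links carry no data and are not checked
        name = f"{link.source.entity}:{link.source.index} -> {link.sink.entity}:{link.sink.index}"
        if not link.source.is_source or link.sink.is_source:
            problems.append(name + ": link must go from a source pad to a sink pad")
        elif link.source.fmt != link.sink.fmt:
            problems.append(name + ": formats differ")
    return problems


# Hypothetical two-link pipeline: sensor -> isp -> dma.
raw = PadFormat("SRGGB10_1X10", 1920, 1080)
rgb = PadFormat("RBG888_1X24", 1920, 1080)
links = [
    Link(Pad("sensor", 0, True, raw), Pad("isp", 0, False, raw)),
    Link(Pad("isp", 1, True, rgb), Pad("dma", 0, False, raw)),   # deliberate mismatch
]
problems = find_format_mismatches(links)
print(problems)
```

The second link is reported because its source and sink pads disagree on the media bus format, which is the kind of inconsistency that link validation is meant to catch.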

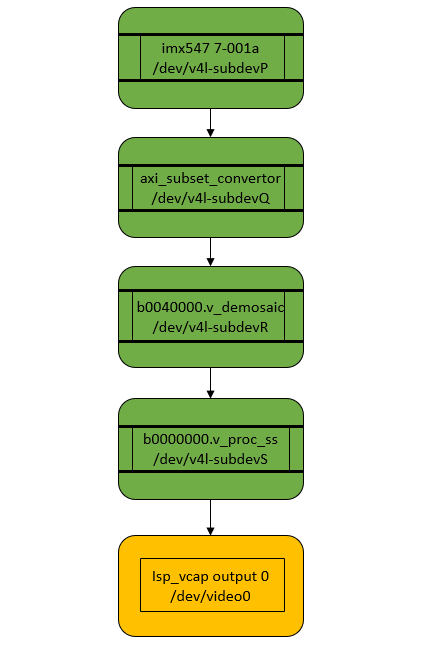

The following figure shows the media graph for the video capture pipeline as generated by the media-ctl utility. The sub-devices are shown in green with their corresponding control interface base address and sub-device node in the center. The numbers on the edges are pads and the solid arrows represent active links. The yellow boxes are video nodes that correspond to DMA channels, in this case, write channels (outputs).

Video IP Drivers¶

AMD adopted the V4L2 framework for most of its video IP portfolio. The currently supported video IPs and corresponding drivers are listed under V4L2. Each V4L2 driver has a sub-page that lists driver-specific details and provides pointers to additional documentation. The following table provides a quick overview of the drivers used in this design.

| Linux Driver | Function |
|---|---|
| Xilinx Video Pipeline (XVIPP) | Configures the video pipeline and registers media, video, and sub-device nodes; configures all entities in the pipeline and validates links; configures and controls DMA engines (Xilinx Video Framebuffer Write) |
| Vitis Vision ISP Pipeline | Supports both color and mono sensor pipeline variants; sets the media bus format and resolution for ISP input and output pads; provides ISP control parameters for setting gamma, gain, contrast, and so on |
| IMX547 Image Sensor | Configures sensor pad parameters such as format and resolution; provides sensor controls such as exposure and gain |

Display Pipeline¶

Overview¶

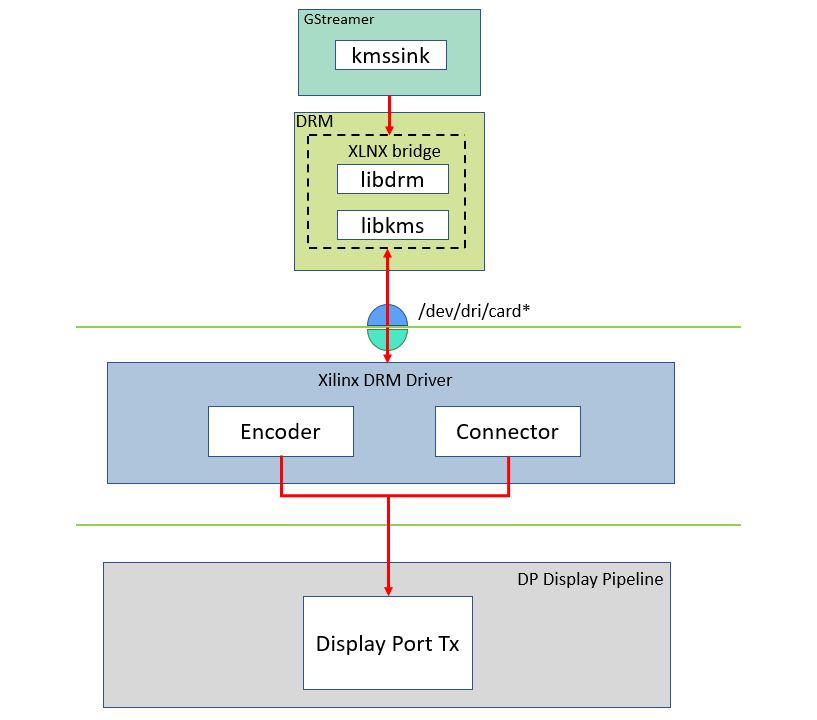

Linux kernel and user-space frameworks for display and graphics are intertwined, and the software stack can be quite complex, with many layers and different standards and APIs. On the kernel side, the display and graphics portions are split, with each having its own API. However, both are commonly referred to as a single framework, namely DRM/KMS. This split is advantageous, especially for SoCs that often have dedicated hardware blocks for display and graphics. The display pipeline driver, which is responsible for interfacing with the display, uses the kernel mode setting (KMS) API, and the GPU, which is responsible for drawing objects into memory, uses the direct rendering manager (DRM) API. Both APIs are accessed from user space through a single device node.

Direct Rendering Manager¶

The Direct Rendering Manager (DRM) is a subsystem of the Linux kernel responsible for interfacing with a GPU. DRM exposes an API that user-space programs can use to send commands and data to the GPU. The ARM Mali GPU uses a proprietary driver stack that is discussed in the next section. Therefore, this section focuses on the common infrastructure around memory allocation and management that is shared with the KMS API.

Driver Features¶

The AMD DRM driver uses the GEM memory manager and implements DRM PRIME buffer sharing. PRIME is the cross-device buffer sharing framework in DRM. To user-space, PRIME buffers are DMABUF-based file descriptors. The DRM GEM/CMA helpers use the CMA allocator as a means to provide buffer objects that are physically contiguous in memory. This is useful for display drivers that are unable to map scattered buffers via an IOMMU. Frame buffers are abstract memory objects that provide a source of pixels to scan out to a CRTC. Applications explicitly request the creation of frame buffers through the DRM_IOCTL_MODE_ADDFB(2) ioctls and receive an opaque handle that can be passed to the KMS CRTC control, plane configuration and page flip functions.

Kernel Mode Setting¶

Mode setting is an operation that sets the display mode, including video resolution and refresh rate. Traditionally, it was done in user space by the X server, which caused several issues because low-level hardware was accessed from user space; if done wrong, this can lead to system instability. The mode setting API was therefore added to the kernel DRM framework, hence the name Kernel Mode Setting. The KMS API is responsible for handling the frame buffer and planes, setting the mode, and performing page flips (switching between buffers). The KMS device is modeled as a set of planes, CRTCs, encoders, and connectors, as shown in the top half of the DP Tx display figure. The bottom half of that figure shows how the driver model maps to the physical hardware components inside the PS DP Tx display pipeline.

Encoder¶

An encoder takes pixel data from a CRTC and converts it into a format suitable for any attached connectors. There are many different display protocols defined, such as HDMI or DisplayPort. The PS display pipeline has a built-in DisplayPort transmitter. The encoded video data is then sent to the serial I/O unit (SIOU), which serializes the data using the gigabit transceivers (PS GTRs) before it goes out via the physical DP connector to the display. The PL display pipeline uses an HDMI transmitter, which sends the encoded video data to the Video PHY. The Video PHY serializes the data using the GTH transceivers in the PL before it goes out via the HDMI TX connector.

Connector¶

The connector models the physical interface to the display. Both the DisplayPort and HDMI protocols use a query mechanism to obtain the monitor resolution and refresh rate by reading the extended display identification data (EDID) (see VESA Standard) stored inside the monitor. This data can then be used to correctly set the CRTC mode. DisplayPort supports hot-plug events to detect whether a cable has been connected or disconnected, and it handles display power management signaling (DPMS) power modes.
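To illustrate how resolution and refresh rate can be recovered from EDID, the sketch below decodes the first detailed timing descriptor of a 128-byte EDID base block, which typically describes the monitor's preferred mode. The function names are assumptions for this example, the sample bytes are synthetic 1080p60 timing values rather than data read from a real monitor, and the sysfs path in the comment is a typical Linux location whose connector name varies per system.

```python
EDID_HEADER = bytes([0x00, 0xFF, 0xFF, 0xFF, 0xFF, 0xFF, 0xFF, 0x00])


def parse_preferred_mode(edid):
    """Return (width, height, refresh_hz) from the first detailed timing descriptor."""
    if len(edid) < 128 or bytes(edid[:8]) != EDID_HEADER:
        raise ValueError("not a valid EDID base block")
    d = edid[54:72]     # first 18-byte descriptor
    pixel_clock_hz = int.from_bytes(d[0:2], "little") * 10_000
    if pixel_clock_hz == 0:
        raise ValueError("first descriptor is not a detailed timing")
    # Each value is 12 bits: low 8 bits in one byte, high 4 bits in a shared byte.
    h_active = d[2] | ((d[4] & 0xF0) << 4)
    h_blank = d[3] | ((d[4] & 0x0F) << 8)
    v_active = d[5] | ((d[7] & 0xF0) << 4)
    v_blank = d[6] | ((d[7] & 0x0F) << 8)
    refresh_hz = pixel_clock_hz / ((h_active + h_blank) * (v_active + v_blank))
    return h_active, v_active, refresh_hz


def read_edid(path):
    """Read raw EDID bytes, for example from /sys/class/drm/<connector>/edid."""
    with open(path, "rb") as f:
        return f.read()


# Synthetic base block: header plus 1920x1080 timing with a 148.5 MHz pixel clock.
edid = bytearray(128)
edid[:8] = EDID_HEADER
edid[54:62] = bytes([0x02, 0x3A, 0x80, 0x18, 0x71, 0x38, 0x2D, 0x40])

width, height, refresh = parse_preferred_mode(edid)
print(width, height, round(refresh, 2))
```

The sketch skips the EDID checksum and extension blocks; a complete parser would verify both.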

Libdrm¶

The framework exposes two device nodes per display pipeline to user space: the /dev/dri/card* device node and an emulated /dev/fb* device node for backward compatibility with the legacy fbdev Linux framework. The latter is not used in this design. libdrm was created to make it easier for user-space programs to interface with the DRM subsystem. This library is merely a wrapper that provides a function written in C for every ioctl of the DRM API, as well as constants, structures, and other helper elements. Using libdrm not only avoids exposing the kernel interface directly to user space but also brings the usual advantages of reusing and sharing code between programs.

10GigE Vision Pipeline¶

The image acquisition pipeline is completely offloaded to hardware; no software is involved in the streaming path. Software is used only for configuring the system, that is, the sensor and the GigE Vision IP. Because Linux runs on the ARM system, a dedicated IMX547 driver is used, which programs the sensor via PS I2C.

A second driver (s2imac) integrates the XGigE core as ethX to the Linux system and binds standard Linux network services to that interface. A software application (gvrd) initializes the hardware and runs the GigE Vision control channel.

According to the GigE Vision specification, the device registers are described in an XML file. The host application requests this XML file from the device and creates a register tree. The registers on the device can then be read and written via the control channel. Hardware register accesses are implemented through calls to the s2imac network driver. Furthermore, the device software is responsible for configuring the GenICam GenDC descriptor.
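The idea of building a register tree from the device's XML description can be sketched as follows. The XML fragment is a heavily simplified, hypothetical stand-in: real GenICam description files use XML namespaces and many more node types, and the register names and addresses here are invented for the example.

```python
import xml.etree.ElementTree as ET

# Simplified, hypothetical register description (not a real device file).
SAMPLE_XML = """
<RegisterDescription>
  <IntReg Name="WidthReg">
    <Address>0xA000</Address>
    <Length>4</Length>
    <AccessMode>RW</AccessMode>
  </IntReg>
  <IntReg Name="HeightReg">
    <Address>0xA004</Address>
    <Length>4</Length>
    <AccessMode>RW</AccessMode>
  </IntReg>
</RegisterDescription>
"""


def build_register_map(xml_text):
    """Map each register name to its address, length in bytes, and access mode."""
    root = ET.fromstring(xml_text.strip())
    registers = {}
    for node in root.iter("IntReg"):
        registers[node.get("Name")] = {
            "address": int(node.findtext("Address"), 0),   # base 0 accepts 0x prefixes
            "length": int(node.findtext("Length"), 0),
            "access": node.findtext("AccessMode"),
        }
    return registers


registers = build_register_map(SAMPLE_XML)
for name, info in registers.items():
    print(f"{name}: 0x{info['address']:04X} ({info['length']} bytes, {info['access']})")
```

With such a map, a host application can translate a feature name into the address that it reads or writes over the control channel.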

Next Steps¶

Go back to the Hardware Architecture of the Platform

Go back to the Application Deployment

Copyright © 2023–2024 Advanced Micro Devices, Inc.